On April 17, 2026, OpenAI committed more than $20 billion to lease servers powered by Cerebras chips. The same day, Cerebras filed for an IPO on Nasdaq at a target valuation of approximately $25 billion. Those two facts, announced together, are not a coincidence.

The structure of the deal

This is not a standard procurement contract. OpenAI signed a three-year agreement to buy compute capacity from Cerebras, as first reported by The Information. It also holds warrants for up to 10% of Cerebras equity, tied to its total spending reaching $30 billion. On top of that, OpenAI is extending Cerebras $1 billion as a working-capital deposit for data center construction, which it books as an asset on its own balance sheet rather than as an expense.

Read that last part again. OpenAI is helping finance the data centers it will then lease capacity from, and counting that money as an asset. It is not simply buying chips from a supplier. It is capitalizing a supplier it could end up partially owning.

The deal started smaller. In January 2026, OpenAI and Cerebras signed an initial agreement worth more than $10 billion. By April, the commitment had doubled.

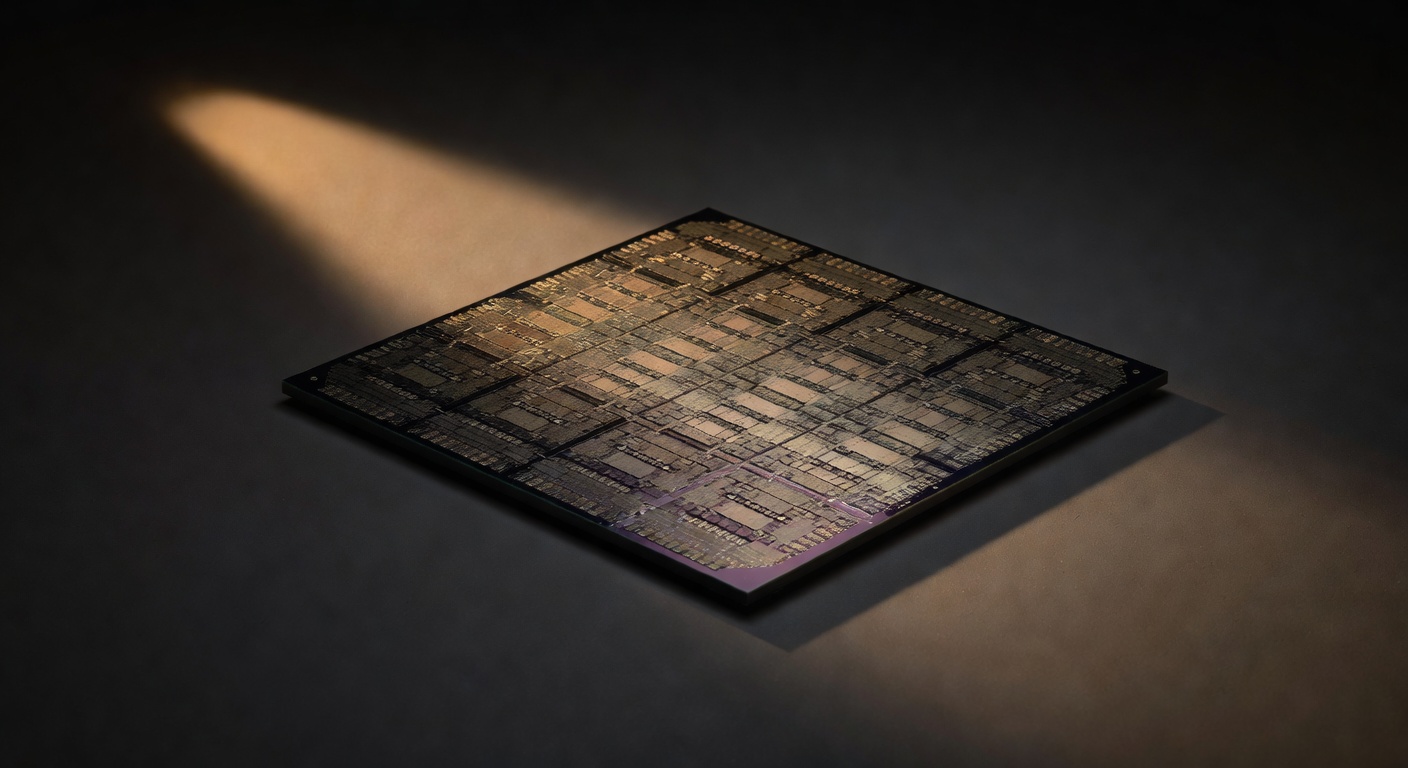

The chip itself

Cerebras builds its business around one chip: the WSE-3, a single wafer-scale processor that covers 46,225 square millimeters of silicon. That is roughly 57 times the area of Nvidia's H100. It integrates 900,000 AI cores and carries 44GB of ultra-fast on-chip SRAM. Cerebras now pitches it chiefly at inference, running a trained model against live queries, rather than at the training process that produces the model in the first place.
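The size comparison is a plain area ratio. As a quick check, assuming the commonly cited H100 die area of about 814 square millimeters (a figure not stated in this piece):

$$
\frac{A_{\text{WSE-3}}}{A_{\text{H100}}} = \frac{46{,}225\ \text{mm}^2}{814\ \text{mm}^2} \approx 56.8
$$

So "56 or 57 times larger" is a statement about silicon area, not about speed or throughput.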

That distinction matters more than it sounds.

Why inference is the actual prize

For most of AI's commercial history, training was the expensive part. Building a model like GPT-4 required massive GPU clusters running for weeks or months. Inference, the part where a user types a prompt and gets an answer, seemed like a footnote.

It is no longer a footnote. According to PANews, inference accounted for 50% of all AI compute spending in 2025. By 2026, it is projected to reach two-thirds. Lenovo CEO Yang Yuanqing put it plainly: the structure of AI spending is flipping from "80% training, 20% inference" to "20% training, 80% inference."

Nvidia's H100 and H200 were built with training as the headline workload. For inference they run into memory-bandwidth limits: generating each token means streaming model weights in from external memory, which makes the work relatively inefficient. Cerebras built its entire architecture around the opposite problem, keeping data in on-chip SRAM. Nvidia saw the same shift coming. In December 2025, it struck a $20 billion deal for Groq's chip assets and inference IP, structured as an asset acquisition plus a non-exclusive IP license plus an acqui-hire of Groq's senior engineers, with Groq continuing as an independent company. It is Nvidia's largest deal by value, nearly three times the $6.9 billion Mellanox acquisition announced in 2019.

Two $20 billion bets, placed within four months of each other, on the same piece of the market. That is not coincidence either.

A valuation that tripled in seven months

In September 2025, Cerebras was valued at $8.1 billion. By February 2026, a $1 billion Series H closed at a $23 billion post-money valuation. When the OpenAI deal was announced in April, the IPO target landed at approximately $25 billion, with a ~$2 billion raise. That is roughly a tripling in seven months, driven almost entirely by a single customer relationship.
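The "tripling" is simple arithmetic on the three reported valuations. Below is a minimal Python sketch. It assumes month-start dates as stand-ins for the reported months, since the exact closing days are not given. The helper names `months_between` and `summarize` are illustrative, not from any source.

```python
from datetime import date

# Reported valuations in USD billions. Dates are month-start placeholders
# (assumption): only the month matters for the elapsed-month count.
milestones = [
    ("Series G", date(2025, 9, 1), 8.1),
    ("Series H", date(2026, 2, 1), 23.0),
    ("IPO target", date(2026, 4, 1), 25.0),
]


def months_between(a: date, b: date) -> int:
    """Whole calendar months from a to b, ignoring the day of month."""
    return (b.year - a.year) * 12 + (b.month - a.month)


def summarize(points):
    """Return (months elapsed, overall valuation multiple) from first to last milestone."""
    _, start_date, start_val = points[0]
    _, end_date, end_val = points[-1]
    return months_between(start_date, end_date), end_val / start_val


if __name__ == "__main__":
    months, multiple = summarize(milestones)
    print(f"{multiple:.2f}x over {months} months")  # prints "3.09x over 7 months"
```

Most of the jump happens between September and February: $8.1 billion to $23 billion is already about 2.8x, before the OpenAI deal was announced.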

The previous IPO attempt, filed in 2024, was withdrawn in October 2025. It had stalled over G42, a UAE tech group with historical ties to Huawei, which accounted for 83% of Cerebras' 2023 revenue and 97% of its 2024 hardware sales. CFIUS reviewed G42's investment in Cerebras on national-security grounds. Cerebras converted G42's stake to non-voting shares, won clearance in March 2025, and entered 2026 with G42 off the investor list entirely.

Cerebras has swapped one geopolitically complicated anchor customer for a less complicated one: OpenAI. The public market that was closed to it is open again because the old customer was restructured out.

The parallel track with Broadcom

The Cerebras deal is not OpenAI's only move here. In parallel, OpenAI is working with Broadcom to develop its own custom chips (ASICs), with mass production expected by the end of 2026. Cerebras secures non-Nvidia inference capacity in the near term. Custom silicon with Broadcom is the longer play toward full vertical integration.

Apple ran a version of this sequence. Its early iPhones used processors manufactured by Samsung. Apple bought chip designer P.A. Semi in 2008, shipped its first in-house A-series design, the A4, in 2010, and in 2020 moved the Mac off Intel with its own M-series silicon. It still relies on an outside foundry to manufacture those chips, but it owns the designs. OpenAI is not at that last step. But the shape of the move is the same.

Why it matters

The conventional read on this story is that OpenAI is reducing its dependence on Nvidia. That is true, but it is the least interesting part.

The more precise read is this: OpenAI is positioning itself to own a piece of the infrastructure that runs every answer ChatGPT gives. Training a model is a one-time cost. Inference is a recurring cost that scales with every user, every query, every product built on top of the API. Whoever controls the chips that handle inference controls the margin on AI at scale.
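The one-time versus recurring distinction can be made concrete with a toy cost model. The sketch below uses purely hypothetical inputs: a $100 million training run and $2 per thousand queries. These are not OpenAI's or Cerebras' figures. The model also assumes a flat cost per query and flat daily traffic. It finds the day on which cumulative inference spend overtakes the training bill.

```python
import math


def daily_inference_cost(queries_per_day: float, cost_per_1k_queries: float) -> float:
    """Recurring spend per day: scales linearly with query volume."""
    return queries_per_day / 1_000 * cost_per_1k_queries


def breakeven_day(training_cost: float, daily_cost: float) -> int:
    """First whole day on which cumulative inference spend exceeds the one-time training spend."""
    if daily_cost <= 0:
        raise ValueError("daily inference cost must be positive")
    # After d days, inference has cost d * daily_cost; we need d * daily_cost > training_cost.
    return math.floor(training_cost / daily_cost) + 1


if __name__ == "__main__":
    # Hypothetical, illustrative inputs only.
    training_cost = 100_000_000  # one-time, USD
    for queries in (1_000_000_000, 10_000_000_000):
        daily = daily_inference_cost(queries, cost_per_1k_queries=2.0)
        yearly = 365 * daily
        print(
            f"{queries:,} queries/day: inference passes training on day "
            f"{breakeven_day(training_cost, daily)}; one year of inference = "
            f"{yearly / training_cost:.1f}x the training bill"
        )
```

With these made-up numbers, a tenfold rise in traffic moves the crossover from day 51 to day 6. That is the sense in which inference cost "scales with every user": the training bill stays fixed while the recurring bill grows with usage.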

Nvidia understood this when it struck its deal for Groq's inference technology. OpenAI is making the same bet from the other side of the table, financing the expansion of an Nvidia competitor rather than building or buying one outright. The $1 billion working-capital deposit, structured as an asset rather than an expense, signals that OpenAI intends this to be a durable relationship with real financial stakes, not a vendor contract it can walk away from.

Prediction markets rank Cerebras among the most likely 2026 IPOs alongside SpaceX. The market read is that the OpenAI anchor relationship is real, durable, and enough to carry a public company.

Nvidia's grip on AI training was never the whole game. The game is what happens after the model ships, billions of times a day, forever.

Is OpenAI breaking a monopoly, or just building a smaller one it happens to own a piece of?

Originally published as an Instagram carousel on @recul.ai.